Ceph (software)

{{Short description|Open-source storage platform}}
{{About|the computer storage platform|other uses|Ceph (disambiguation)}}
{{primary sources|date=March 2018}}
{{Infobox software
| name = Ceph Storage
| logo = [[File:Ceph (software) logo.svg]]
| author = [[Inktank Storage]] ([[Sage Weil]], Yehuda Sadeh Weinraub, Gregory Farnum, Josh Durgin, Samuel Just, Wido den Hollander)
| developer = [[Red Hat]], [[Intel]], [[CERN]], [[Cisco]], [[Fujitsu]], [[SanDisk]], [[Canonical (company)|Canonical]] and [[SUSE S.A.|SUSE]]<ref>{{cite web
| url = http://www.storagereview.com/ceph_community_forms_advisory_board
| date = 2015-10-28

| Line 21: | Line 20: | ||

| url = https://github.com/ceph/ceph/search?l=C%2B%2B
| title = GitHub Repository| website = [[GitHub]]}}</ref>
| operating system = [[Linux]], [[FreeBSD]],<ref name=freebsd>{{cite web
| url = https://www.freebsd.org/news/status/report-2016-10-2016-12.html#Ceph-on-FreeBSD
| title = FreeBSD Quarterly Status Report}}</ref> [[Windows]]<ref name=windows/>
| genre = Distributed object store
| license = [[GNU Lesser General Public License|LGPLv2.1]]<ref>{{cite web

| Line 34: | Line 33: | ||

}}
'''Ceph''' (pronounced {{IPAc-en|ˈ|s|ɛ|f}}) is a [[Free software|free]] and [[open-source software|open-source]] software-defined [[Computer data storage|storage]] [[computing platform|platform]] that provides [[object storage]],<ref>{{Cite news| last=Nicolas|first=Philippe|date=2016-07-15|title=The History Boys: Object storage ... from the beginning|language=en| work=The Register| url=https://www.theregister.co.uk/2016/07/15/the_history_boys_cas_and_object_storage_map/}}</ref> [[Block-level_storage|block storage]], and [[Network-attached_storage|file storage]] built on a common [[computer cluster|distributed]] cluster foundation. Ceph operates in a fully distributed fashion without a [[single point of failure]] and scales to the [[exabyte]] level. Since version 12 (Luminous), Ceph no longer relies on a conventional filesystem: its own storage back end, BlueStore, manages [[Hard disk drive|HDD]]s and [[Solid-state drive|SSD]]s directly. Ceph can also expose a [[POSIX]] [[filesystem]].

Ceph [[replication (computer science)|replicates]] data with [[fault tolerance]],<ref name=kerneltrap>{{cite web |date= 2007-11-15 |author= Jeremy Andrews |title= Ceph Distributed Network File System |url= http://kerneltrap.org/Linux/Ceph_Distributed_Network_File_System |publisher= [[KernelTrap]] |access-date= 2007-11-15 |archive-url= https://web.archive.org/web/20071117102035/http://kerneltrap.org/Linux/Ceph_Distributed_Network_File_System |archive-date= 2007-11-17 |url-status= dead}}</ref> using [[commodity hardware]] and standard Ethernet/IP networking, and requires no specialized hardware support. Ceph is [[High_availability|highly available]] and provides strong data durability through techniques including replication, [[Erasure_code|erasure coding]], snapshots, and clones. By design, the system is both self-healing and [[self-management (computer science)|self-managing]], minimizing administration time and other costs.
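
The capacity trade-off between replication and erasure coding mentioned above can be shown with simple arithmetic. The following Python sketch is illustrative only: its function names are hypothetical, it assumes every copy or chunk of an object sits on a different device, and it ignores the metadata and free-space headroom a real cluster must reserve.

```python
from fractions import Fraction


def replication_profile(copies: int) -> tuple[Fraction, int]:
    """Return (raw bytes stored per logical byte, lost devices tolerated)
    for N-way replication, where every object is stored `copies` times."""
    if copies < 1:
        raise ValueError("copies must be at least 1")
    return Fraction(copies), copies - 1


def erasure_profile(k: int, m: int) -> tuple[Fraction, int]:
    """Return the same pair for a k+m erasure code: each object is split into
    k data chunks plus m coding chunks, and any k of them can rebuild it."""
    if k < 1 or m < 0:
        raise ValueError("need k >= 1 and m >= 0")
    return Fraction(k + m, k), m


def usable_capacity(raw: int, overhead: Fraction) -> Fraction:
    """Logical capacity left after protection overhead (no headroom modeled)."""
    return raw / overhead


if __name__ == "__main__":
    raw_tb = 600
    schemes = {
        "3-way replication": replication_profile(3),
        "erasure code k=4, m=2": erasure_profile(4, 2),
    }
    for name, (overhead, tolerated) in schemes.items():
        usable = usable_capacity(raw_tb, overhead)
        print(f"{name}: {float(overhead):.2f}x raw, tolerates {tolerated} "
              f"lost devices, {float(usable):.0f} of {raw_tb} TB usable")
```

With these assumed figures, both layouts survive the loss of any two devices holding an object, but the 4+2 code leaves twice as much usable capacity. Erasure coding generally pays for this with extra CPU and network work on writes and during recovery.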

Large-scale production Ceph deployments include [[CERN]],<ref>{{cite web |title=Ceph Clusters |url=https://cephdocs.s3-website.cern.ch/ops/clusters/index.html |website=CERN |access-date=12 November 2022}}</ref><ref>{{cite web |title=Ceph Operations at CERN: Where Do We Go From Here? - Dan van der Ster & Teo Mouratidis, CERN |url=https://www.youtube.com/watch?v=0i7ew3XXb7Q |website=YouTube |date=24 May 2019 |access-date=12 November 2022}}</ref> [[OVH]],<ref>{{cite web |last1=Dorosz |first1=Filip |title=Journey to next-gen Ceph storage at OVHcloud with LXD |url=https://blog.ovhcloud.com/journey-to-next-gen-ceph-storage-at-ovhcloud-with-lxd/ |website=OVHcloud |date=15 June 2020 |access-date=12 November 2022}}</ref><ref>{{cite web |title=CephFS distributed filesystem |url=https://docs.ovh.com/us/en/storage/block-storage/ceph/cephfs/ |website=OVHcloud |access-date=12 November 2022}}</ref><ref>{{cite web |title=Ceph - Distributed Storage System in OVH [en] - Bartłomiej Święcki |url=https://www.youtube.com/watch?v=rmgYvRwDf4A |website=YouTube |date=7 April 2016 |access-date=12 November 2022}}</ref><ref>{{cite web |title=200 Clusters vs 1 Admin - Bartosz Rabiega, OVH |url=https://www.youtube.com/watch?v=yuFnelsxP_c |website=YouTube |date=24 May 2019 |access-date=15 November 2022}}</ref> and [[DigitalOcean]].<ref>{{cite web |last1=D'Atri |first1=Anthony |title=Why We Chose Ceph to Build Block Storage |url=https://www.digitalocean.com/blog/why-we-chose-ceph-to-build-block-storage |website=DigitalOcean |date=31 May 2018 |access-date=12 November 2022}}</ref><ref>{{cite web |title=Ceph Tech Talk: Ceph at DigitalOcean |url=https://www.youtube.com/watch?v=k_bTg72eOhU |website=YouTube |date=7 October 2021 |access-date=12 November 2022}}</ref>

Because object, block, and file storage share one cluster, administrators have a single, consolidated system that manages all of this storage within a common framework. Consolidating several storage use cases in this way can improve resource utilization and lets an organization deploy servers where they are needed.{{citation needed|date=October 2022}}

==Design==

| Line 55: | Line 51: | ||

}}</ref>
* Cluster monitors ({{Mono|ceph-mon}}) that keep track of active and failed cluster nodes, cluster configuration, and information about data placement and global cluster state.
* [[Object storage device|OSD]]s ({{Mono|ceph-osd}}) that manage bulk data storage devices directly via the BlueStore back end,<ref name="bluestore">{{cite web | url=http://docs.ceph.com/docs/master/rados/configuration/storage-devices/#bluestore | title=BlueStore | accessdate=2017-09-29 | publisher=Ceph}}</ref> which since the v12.x release has replaced the Filestore<ref>{{cite web|url=https://docs.ceph.com/docs/mimic/rados/operations/bluestore-migration/|title=BlueStore Migration|accessdate=2020-04-12|archive-date=2019-12-04|archive-url=https://web.archive.org/web/20191204094405/https://docs.ceph.com/docs/mimic/rados/operations/bluestore-migration/|url-status=dead}}</ref> back end (which was implemented on top of a traditional filesystem)
* [[Metadata]] servers ({{Mono|ceph-mds}}) that maintain and broker access to [[inode]]s and [[directory (file systems)|directories]] inside a CephFS filesystem
* [[HTTP]] gateways ({{Mono|ceph-rgw}}) that expose the object storage layer as an interface compatible with [[Amazon S3]] or [[Openstack#Object storage (Swift)|OpenStack Swift]] APIs
* Managers ({{Mono|ceph-mgr}}) that perform cluster monitoring, bookkeeping, and maintenance tasks, and host plugins for management and for interfacing with external monitoring systems (e.g. balancer, dashboard, [[Prometheus (software)|Prometheus]], Zabbix)<ref>{{cite web|url=http://docs.ceph.com/docs/mimic/mgr/|archive-url=https://web.archive.org/web/20180606153846/http://docs.ceph.com/docs/mimic/mgr/|url-status=dead|archive-date=June 6, 2018|title=Ceph Manager Daemon — Ceph Documentation|website=docs.ceph.com|access-date=2019-01-31}}
[https://docs.ceph.com/docs/mimic/mgr/ archive link] {{Webarchive|url=https://web.archive.org/web/20200619033017/https://docs.ceph.com/docs/mimic/mgr/ |date=June 19, 2020 }}</ref>

All of these daemons are fully distributed and may be deployed on separate, dedicated servers or in a [[Hyper-converged_infrastructure|converged topology]]. Clients interact directly with the cluster components relevant to their needs.<ref name=lwn>{{cite web |date=2007-11-14 |author=Jake Edge |title=The Ceph filesystem |url=https://lwn.net/Articles/258516/ |publisher=[[LWN.net]]}}</ref>
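
Because clients talk to the relevant daemons directly, each client must be able to work out where an object lives without consulting a central lookup table. In Ceph this is done by hashing each object into a placement group and then mapping placement groups to OSDs with the CRUSH algorithm, which also weighs devices and respects failure domains. The Python sketch below does not implement CRUSH; it substitutes the much simpler rendezvous (highest-random-weight) hashing only to illustrate deterministic, client-side placement, and its function and object names are hypothetical.

```python
import hashlib


def _score(object_name: str, osd_id: int) -> int:
    """Stable pseudo-random score for an (object, OSD) pair."""
    digest = hashlib.sha256(f"{object_name}:{osd_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def place(object_name: str, osd_ids: list[int], replicas: int) -> list[int]:
    """Choose `replicas` distinct OSDs for an object with rendezvous hashing.

    Every OSD is scored against the object name and the highest scores win,
    so the result depends only on the inputs, not on any shared lookup table.
    """
    if replicas > len(osd_ids):
        raise ValueError("not enough OSDs for the requested replica count")
    ranked = sorted(osd_ids, key=lambda osd: _score(object_name, osd), reverse=True)
    return ranked[:replicas]


if __name__ == "__main__":
    osds = list(range(8))
    print("placement:", place("vm-disk-0001", osds, replicas=3))
    # Losing one OSD only changes placements that included it.
    survivors = [osd for osd in osds if osd != 5]
    print("after losing OSD 5:", place("vm-disk-0001", survivors, replicas=3))
```

Any client holding the same OSD list computes the same answer. Removing an OSD only changes the placements that included it, a property CRUSH is also designed to approximate while handling weights and failure domains.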

Ceph [[striping|distributes]] data across multiple storage devices and nodes to achieve higher throughput, in a fashion similar to [[RAID]]. Adaptive [[load balancing (computing)|load balancing]] is supported, whereby frequently accessed services may be replicated over more nodes.<ref name=lcse>{{cite web|date=2017-10-01|author=Anthony D'Atri, Vaibhav Bhembre|title=Learning Ceph, Second Edition|url=https://www.packtpub.com/product/learning-ceph-second-edition/9781787127913|publisher=[[Packt]]}}</ref>
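
The RAID-like striping described above comes down to an address calculation. This Python sketch assumes a layout defined by a stripe unit, a stripe count, and an object size (modeled on the CephFS file-layout fields of the same names) and is a simplified illustration rather than Ceph's own striping code: consecutive stripe units are dealt round-robin across a set of objects, and once those objects are full the next set begins.

```python
def locate(offset: int, stripe_unit: int, stripe_count: int,
           object_size: int) -> tuple[int, int]:
    """Map a logical byte offset to (object number, offset inside that object)."""
    if stripe_unit <= 0 or stripe_count <= 0 or object_size % stripe_unit:
        raise ValueError("need positive parameters and object_size % stripe_unit == 0")
    block = offset // stripe_unit              # index of the stripe-unit-sized block
    stripe = block // stripe_count             # which horizontal stripe (row)
    position = block % stripe_count            # which object within that row
    units_per_object = object_size // stripe_unit
    object_set = stripe // units_per_object    # each set holds stripe_count objects
    object_no = object_set * stripe_count + position
    offset_in_object = (stripe % units_per_object) * stripe_unit + offset % stripe_unit
    return object_no, offset_in_object


if __name__ == "__main__":
    # Tiny numbers for readability: 4-byte units, 3 objects per set, 8-byte objects.
    for off in range(0, 32, 4):
        print(off, locate(off, stripe_unit=4, stripe_count=3, object_size=8))
```

With a stripe count of 1 and a stripe unit equal to the object size, the calculation reduces to plain chunking, in which offset ''N'' lands in object ''N'' // object_size.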

||

| ⚫ | {{As of|2017|9}}, BlueStore is the default and recommended storage |

||

| ⚫ | {{As of|2017|9}}, BlueStore is the default and recommended storage back end for production environments,<ref name=luminous>{{cite web|date=2017-08-29 |author = Sage Weil |title=v12.2.0 Luminous Released|url=http://ceph.com/releases/v12-2-0-luminous-released/ |publisher=Ceph Blog}}</ref> which provides better latency and configurability than the older Filestore back end, and avoiding the shortcomings of filesystem based storage involving additional processing and caching layers. The Filestore back end will be deprecated as of the Reef release in mid 2023. [[XFS]] was the recommended underlying filesystem for Filestore OSDs, and [[Btrfs]] could be used at one's own risk. [[ext4]] filesystems were not recommended due to limited metadata capacity.<ref>{{cite web|title=Hard Disk and File System Recommendations|url=http://docs.ceph.com/docs/master/rados/configuration/filesystem-recommendations/|publisher=ceph.com|accessdate=2017-06-26|archive-url=https://web.archive.org/web/20170714142019/http://docs.ceph.com/docs/master/rados/configuration/filesystem-recommendations/|archive-date=2017-07-14|url-status=dead}}</ref> The BlueStore back end does still use XFS for a small metadata partition.<ref>{{cite web|url=https://docs.ceph.com/docs/mimic/rados/configuration/bluestore-config-ref/|title=BlueStore Config Reference|accessdate=April 12, 2020|archive-date=July 20, 2019|archive-url=https://web.archive.org/web/20190720185522/http://docs.ceph.com/docs/mimic/rados/configuration/bluestore-config-ref/|url-status=dead}}</ref> |

||

| ⚫ | |||

{{Anchor|RADOS}} |

{{Anchor|RADOS}} |

||

===Object storage S3=== |

===Object storage S3=== |

||

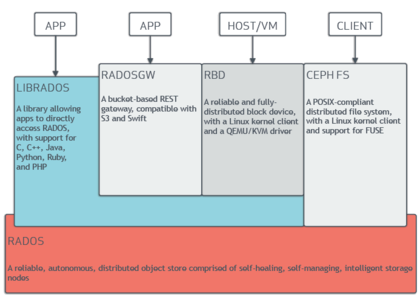

[[File:Ceph stack.png|thumb|right|upright=1.9|An architecture diagram showing the relations |

[[File:Ceph stack.png|thumb|right|upright=1.9|An architecture diagram showing the relations among components of the Ceph storage platform]] |

Ceph implements distributed [[object storage]] via the RADOS Gateway ({{Mono|ceph-rgw}}), which exposes the underlying storage layer through an interface compatible with [[Amazon S3]] or [[Openstack#Object storage (Swift)|OpenStack Swift]].

Ceph RGW deployments scale readily and often use large, dense storage media for bulk use cases that include [[Big data|big data]] (data lakes), [[Backup|backups]] and [[archives]], [[Internet of things|IoT]], media, video recording, and deployment images for [[Virtual_machine|virtual machines]] and [[Containerization_(computing)|containers]].<ref>{{Cite web |date=2023-07-03 |title=10th International Conference "Distributed Computing and Grid Technologies in Science and Education" (GRID'2023) |url=https://indico.jinr.ru/event/3505/timetable/?view=standard |access-date=2023-08-09 |website=JINR (Indico)}}</ref>

Ceph's software libraries give client applications direct access to the ''reliable autonomic distributed object store'' (RADOS) object-based storage system. Libraries for Ceph's ''RADOS Block Device'' (RBD), ''RADOS Gateway'', and ''Ceph File System'' services are more commonly used. In this way, administrators can maintain their storage devices within a unified system, which makes it easier to replicate and protect the data.

The "librados" [[software libraries]] provide access in [[C (programming language)|C]], [[C++]], [[Java (programming language)|Java]], [[PHP]], and [[Python (programming language)|Python]]. The RADOS Gateway also exposes the object store as a [[REST]]ful interface that can present both native [[Amazon S3]] and [[Openstack#Object storage (Swift)|OpenStack Swift]] APIs.

| Line 85: | Line 80: | ||

===Block storage===
Ceph can provide clients with [[Thin provisioning|thin-provisioned]] [[block device]]s. When an application writes data to Ceph using a block device, Ceph automatically stripes and replicates the data across the cluster. Ceph's ''RADOS Block Device'' (RBD) also integrates with the [[Kernel-based Virtual Machine]] (KVM) hypervisor.
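
Thin provisioning means an image advertises its full size up front but consumes space only for regions that have actually been written. The toy Python model below illustrates this by backing an image with fixed-size objects that are created on first write, while unwritten regions read back as zeros. The class is hypothetical and keeps objects in a dictionary rather than in RADOS; its 4 MiB default object size is an assumption chosen to match what is commonly described as RBD's default.

```python
class ThinImage:
    """Toy thin-provisioned block image (hypothetical model, not librbd)."""

    def __init__(self, size: int, object_size: int = 4 * 1024 * 1024):
        self.size = size                  # advertised size in bytes
        self.object_size = object_size
        self.objects = {}                 # object number -> bytearray, created lazily

    def _check(self, offset: int, length: int) -> None:
        if offset < 0 or length < 0 or offset + length > self.size:
            raise ValueError("I/O outside image bounds")

    def write(self, offset: int, data: bytes) -> None:
        self._check(offset, len(data))
        pos = 0
        while pos < len(data):
            obj_no, obj_off = divmod(offset + pos, self.object_size)
            chunk = min(len(data) - pos, self.object_size - obj_off)
            buf = self.objects.get(obj_no)
            if buf is None:               # first write to this object allocates it
                buf = self.objects[obj_no] = bytearray(self.object_size)
            buf[obj_off:obj_off + chunk] = data[pos:pos + chunk]
            pos += chunk

    def read(self, offset: int, length: int) -> bytes:
        self._check(offset, length)
        out = bytearray()
        pos = 0
        while pos < length:
            obj_no, obj_off = divmod(offset + pos, self.object_size)
            chunk = min(length - pos, self.object_size - obj_off)
            buf = self.objects.get(obj_no)
            # Never-written objects read back as zeros without being allocated.
            out += buf[obj_off:obj_off + chunk] if buf is not None else bytes(chunk)
            pos += chunk
        return bytes(out)

    def allocated_bytes(self) -> int:
        return len(self.objects) * self.object_size


if __name__ == "__main__":
    img = ThinImage(size=10 * 2**30)      # a "10 GiB" image
    img.write(5 * 2**30, b"hello")        # touch one spot in the middle
    print(img.read(5 * 2**30, 5), img.allocated_bytes())  # b'hello' 4194304
```

Only one 4 MiB object is allocated even though the image advertises 10 GiB, which is the essence of thin provisioning.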

Ceph block storage may be deployed on traditional HDDs and/or [[Solid-state_drive|SSDs]] for use cases including databases, virtual machines, data analytics, artificial intelligence, and machine learning. Because block storage clients often require high [[Hard_disk_drive_performance_characteristics#Data_transfer_rate|throughput]] and [[IOPS]], Ceph RBD deployments increasingly use SSDs with [[NVM_Express|NVMe]] interfaces.

"RBD" is built on Ceph's foundational RADOS object storage system, which also provides the librados interface and underlies the CephFS file system. Because RBD is built on librados, it inherits librados's capabilities, including clones and [[Snapshot_(computer_storage)|snapshots]]. By striping volumes across the cluster, Ceph improves performance for large block device images.
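
Snapshots and clones of this kind are commonly built on copy-on-write: taking a snapshot copies nothing, and an object's old contents are preserved only when it is next overwritten. The toy Python model below illustrates that general technique at whole-object granularity. Its class and method names are hypothetical, and it does not reproduce how RBD and RADOS actually record snapshots or parent-child clone relationships.

```python
class CowImage:
    """Toy image with copy-on-write snapshots and clones (hypothetical model)."""

    def __init__(self, parent=None):
        self.head = {}        # object number -> current bytes
        self.snaps = {}       # snapshot name -> {object number: preserved bytes or None}
        self.parent = parent  # (parent image, snapshot name) for a clone, else None

    def _current(self, obj_no):
        if obj_no in self.head:
            return self.head[obj_no]
        if self.parent is not None:           # unwritten clone data falls through
            image, snap = self.parent
            return image.read(obj_no, snap)
        return None                           # never written

    def snapshot(self, name):
        if name in self.snaps:
            raise ValueError(f"snapshot {name!r} already exists")
        self.snaps[name] = {}                 # nothing is copied yet

    def write(self, obj_no, data):
        # Before the first overwrite after each snapshot, preserve the old version.
        for preserved in self.snaps.values():
            if obj_no not in preserved:
                preserved[obj_no] = self._current(obj_no)
        self.head[obj_no] = bytes(data)

    def read(self, obj_no, snap=None):
        if snap is not None:
            preserved = self.snaps[snap]
            if obj_no in preserved:
                return preserved[obj_no]
        return self._current(obj_no)

    def clone(self, snap):
        if snap not in self.snaps:
            raise KeyError(snap)
        return CowImage(parent=(self, snap))  # shares data until written


if __name__ == "__main__":
    base = CowImage()
    base.write(0, b"v1")
    base.snapshot("gold")
    base.write(0, b"v2")
    child = base.clone("gold")
    print(base.read(0), base.read(0, "gold"), child.read(0))  # b'v2' b'v1' b'v1'
```

Reading a clone falls through to its parent snapshot until the clone writes its own copy of an object, which is why creating a clone is cheap even for a large image.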

"Ceph-iSCSI" is a gateway that enables access to distributed, highly available block storage from [[Microsoft Windows]] and [[VMware vSphere]] servers or other clients capable of speaking the [[iSCSI]] protocol. When ceph-iscsi runs on one or more iSCSI gateway hosts, Ceph RBD images become available as Logical Units (LUs) associated with iSCSI targets, which can be accessed in an optionally load-balanced, highly available fashion.

Because ceph-iscsi configuration is stored in the Ceph RADOS object store, ceph-iscsi gateway hosts keep no persistent state of their own and can therefore be replaced, added, or removed at will. As a result, Ceph can deliver distributed, highly available, resilient, and self-healing enterprise storage on commodity hardware using an entirely open-source platform.

The block device can be virtualized, providing block storage to virtual machines in virtualization platforms such as [[OpenShift]], [[OpenStack]], [[Kubernetes]], [[OpenNebula]], [[Ganeti]], [[Apache CloudStack]] and [[Proxmox Virtual Environment]].

| Line 99: | Line 94: | ||

{{Anchor|CephFS}}
===File storage===
Ceph's file system (CephFS) runs on top of the same RADOS foundation as Ceph's object storage and block device services. The CephFS metadata server (MDS) provides a service that maps the directories and file names of the file system to objects stored within RADOS clusters. The metadata server cluster can expand or contract, and it can dynamically rebalance file system metadata across its ranks to spread the metadata load evenly among cluster hosts. This helps maintain high performance and prevents heavy loads on specific hosts within the cluster.
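
The central task of the metadata server, translating paths into the RADOS objects that hold file data, can be sketched with a toy single-server namespace in Python. Data objects here are named after the file's inode number in hexadecimal followed by an eight-digit hexadecimal object index, intended to resemble the naming CephFS uses; the class, its inode numbering, and the 4 MiB object size are assumptions. The sketch leaves out multiple MDS ranks and metadata rebalancing entirely.

```python
import itertools


class ToyNamespace:
    """Hypothetical single-MDS model: paths -> inode numbers -> data object names."""

    def __init__(self, object_size: int = 4 * 1024 * 1024):
        self.object_size = object_size
        self._inodes = itertools.count(1)     # arbitrary inode numbering for the toy
        self.root = next(self._inodes)
        self.dirs = {self.root: {}}           # directory inode -> {name: child inode}
        self.sizes = {}                       # regular-file inode -> size in bytes

    def _resolve(self, parts):
        ino = self.root
        for name in parts:
            if ino not in self.dirs:
                raise NotADirectoryError(name)
            if name not in self.dirs[ino]:
                raise FileNotFoundError(name)
            ino = self.dirs[ino][name]
        return ino

    def _new_entry(self, path):
        parts = [p for p in path.split("/") if p]
        if not parts:
            raise ValueError("cannot create the root")
        parent = self._resolve(parts[:-1])
        if parent not in self.dirs:
            raise NotADirectoryError(path)
        if parts[-1] in self.dirs[parent]:
            raise FileExistsError(path)
        ino = next(self._inodes)
        self.dirs[parent][parts[-1]] = ino
        return ino

    def mkdir(self, path):
        ino = self._new_entry(path)
        self.dirs[ino] = {}
        return ino

    def create(self, path, size):
        ino = self._new_entry(path)
        self.sizes[ino] = size
        return ino

    def data_objects(self, path):
        ino = self._resolve([p for p in path.split("/") if p])
        if ino not in self.sizes:
            raise IsADirectoryError(path)
        count = -(-self.sizes[ino] // self.object_size)   # ceiling division
        return [f"{ino:x}.{index:08x}" for index in range(count)]


if __name__ == "__main__":
    ns = ToyNamespace()
    ns.mkdir("/home")
    ns.create("/home/report.pdf", 10 * 1024 * 1024)   # 10 MiB -> 3 objects
    print(ns.data_objects("/home/report.pdf"))
```

Because object names are derived from the inode number, a client that has resolved a path once can address any byte of the file without asking the metadata server again.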

Clients mount the [[POSIX]]-compatible file system using a [[Linux kernel]] client. An older [[Filesystem in Userspace|FUSE]]-based client is also available. The servers run as regular Unix [[daemon (computer software)|daemons]].

| Line 108: | Line 103: | ||

=== Dashboard ===
[[File:Ceph Dashboard landing page.webp|thumb|Ceph Dashboard landing page (2023)]]
Since 2018, a Dashboard web UI project has helped administrators manage the cluster. It is developed by the Ceph community under the LGPL-3 license and uses ceph-mgr, [[Python (programming language)|Python]], the [[Angular (web framework)|Angular]] framework, and [[Grafana]].<ref>{{cite web |title=Ceph Dashboard |url=https://docs.ceph.com/en/latest/mgr/dashboard/ |website=Ceph documentation |access-date=11 April 2023}}</ref> Its landing page was refreshed in early 2023.<ref>{{cite news |last1=Gomez |first1=Pedro Gonzalez |title=Introducing the new Dashboard Landing Page |url=https://ceph.io/en/news/blog/2023/landing-page/ |date=23 February 2023 |access-date=11 April 2023}}</ref>

Earlier dashboards were developed but have since been discontinued: Calamari (2013–2018), OpenAttic (2013–2019), VSM (2014–2016), Inkscope (2015–2016), and Ceph-Dash (2015–2017).<ref>{{cite web |title=Operating Ceph from the Ceph Dashboard: past, present and future |url=https://www.youtube.com/watch?v=pDiugNJSZpk |website=YouTube |date=22 November 2022 |access-date=11 April 2023}}</ref>

=== Crimson ===
Since 2019, the Crimson project has been reimplementing the OSD data path, with the goal of minimizing latency and CPU overhead. Modern storage devices and interfaces, including [[NVMe]] and [[3D XPoint]], have become much faster than [[Hard disk drive|HDD]]s and even SAS/SATA [[Solid-state drive|SSD]]s, but CPU performance has not kept pace. {{Mono|crimson-osd}} is intended as a backward-compatible [[drop-in replacement]] for {{Mono|ceph-osd}}. While Crimson can work with the BlueStore back end (via AlienStore), a new native ObjectStore implementation called SeaStore is also being developed, along with CyanStore for testing purposes. One reason for creating SeaStore is that transaction support in the BlueStore back end is provided by [[RocksDB]], which would need to be reimplemented to achieve better parallelism.<ref>{{cite web |last1=Just |first1=Sam |title=Crimson: evolving Ceph for high performance NVMe |url=https://next.redhat.com/2021/01/18/crimson-evolving-ceph-for-high-performance-nvme/ |website=Red Hat Emerging Technologies |date=18 January 2021 |access-date=12 November 2022}}</ref><ref>{{cite web |last1=Just |first1=Samuel |title=What's new with Crimson and Seastore? |url=https://www.youtube.com/watch?v=vc5w2mn93cY |website=YouTube |date=10 November 2022 |access-date=12 November 2022}}</ref><ref>{{cite web |title=Crimson: Next-generation Ceph OSD for Multi-core Scalability |url=https://ceph.io/en/news/blog/2023/crimson-multi-core-scalability/ |website=Ceph blog |publisher=Ceph |date=7 February 2023 |access-date=11 April 2023}}</ref>

==History==
Ceph was created by [[Sage Weil]] for his [[doctoral dissertation]],<ref name=thesis>{{cite web |date=2007-12-01 |author=Sage Weil |title=Ceph: Reliable, Scalable, and High-Performance Distributed Storage |url=https://ceph.com/wp-content/uploads/2016/08/weil-thesis.pdf |publisher=[[University of California, Santa Cruz]] |access-date=2017-03-11 |archive-date=2017-07-06 |archive-url=https://web.archive.org/web/20170706201040/https://ceph.com/wp-content/uploads/2016/08/weil-thesis.pdf |url-status=dead }}</ref> which was advised by Professor Scott A. Brandt at the [[Jack Baskin School of Engineering]], [[University of California, Santa Cruz]] (UCSC), and sponsored by the [[Advanced Simulation and Computing Program]] (ASC), including [[Los Alamos National Laboratory]] (LANL), [[Sandia National Laboratories]] (SNL), and [[Lawrence Livermore National Laboratory]] (LLNL).<ref>{{cite web
| title = The ASCI/DOD Scalable I/O History and Strategy
| author = Gary Grider

| Line 126: | Line 124: | ||

In April 2014, [[Red Hat]] purchased Inktank, bringing most Ceph development in-house in order to offer a production version for enterprises with support (hotline) and continuous maintenance (new versions).<ref name=redhatacquisition>{{cite web |date=2014-04-30 |accessdate=2014-08-19 |author = Red Hat Inc |title=Red Hat to Acquire Inktank, Provider of Ceph |url=http://www.redhat.com/en/about/press-releases/red-hat-acquire-inktank-provider-ceph |publisher=Red Hat}}</ref>

In October 2015, the Ceph Community Advisory Board was formed to help the community guide the direction of open-source software-defined storage technology. The charter advisory board included Ceph community members from global IT organizations committed to the Ceph project, including individuals from [[Red Hat]], [[Intel]], [[Canonical (company)|Canonical]], [[CERN]], [[Cisco]], [[Fujitsu]], [[SanDisk]], and [[SUSE S.A.|SUSE]].<ref name=advisoryboardformed>{{cite web|url=http://www.storagereview.com/ceph_community_forms_advisory_board|date=2015-10-28|accessdate=2016-01-20|title=Ceph Community Forms Advisory Board|archive-url=https://web.archive.org/web/20190129064135/https://www.storagereview.com/ceph_community_forms_advisory_board|archive-date=2019-01-29|url-status=dead}}</ref>

In November 2018, the Linux Foundation launched the Ceph Foundation as a successor to the Ceph Community Advisory Board. Founding members of the Ceph Foundation included Amihan, [[Canonical (company)|Canonical]], [[China Mobile]], [[DigitalOcean]], [[Intel]], [[OVH]], ProphetStor Data Services, [[Red Hat]], SoftIron, [[SUSE S.A.|SUSE]], [[Western Digital]], XSKY Data Technology, and [[ZTE]].<ref>{{cite web |url=https://www.linuxfoundation.org/en/press-release/the-linux-foundation-launches-ceph-foundation/ |date=2018-11-12 |title=The Linux Foundation Launches Ceph Foundation To Advance Open Source Storage }}{{Dead link|date=November 2023 |bot=InternetArchiveBot |fix-attempted=yes }}</ref>

In March 2021, SUSE discontinued its Enterprise Storage product incorporating Ceph in favor of [[Rancher Labs|Rancher]]'s Longhorn,<ref>{{cite web|url=https://www.theregister.com/2021/03/25/suse_kisses_ceph_goodbye/ | title=SUSE says tschüss to Ceph-based enterprise storage product – it's Rancher's Longhorn from here on out}}</ref> and the former Enterprise Storage website was updated to state that "SUSE has refocused the storage efforts around serving our strategic SUSE Enterprise Storage Customers and are no longer actively selling SUSE Enterprise Storage."<ref>{{cite web|url=https://www.suse.com/products/suse-enterprise-storage/ |title=SUSE Enterprise Software-Defined Storage}}</ref>

=== Release history ===

| Line 171: | Line 169: | ||

| November 9, 2013
| May 2014
| multi-datacenter replication for RGW
|-
| Firefly

| Line 177: | Line 175: | ||

| May 7, 2014
| April 2016
| erasure coding, cache tiering, primary affinity, key/value OSD backend (experimental), standalone RGW (experimental)
|-
| Giant

| Line 201: | Line 199: | ||

| April 21, 2016
| 2018-06-01
| Stable CephFS, experimental OSD back end named BlueStore, daemons no longer run as the root user
|-
| Kraken

| Line 207: | Line 205: | ||

| January 20, 2017
| 2017-08-01
| BlueStore is stable, EC for RBD pools
|-
| Luminous

| Line 213: | Line 211: | ||

| August 29, 2017
| 2020-03-01
| pg-upmap balancer
|-
| Mimic

| Line 219: | Line 217: | ||

| June 1, 2018
| 2020-07-22
| snapshots are stable, Beast is stable, official GUI (Dashboard)
|-
| Nautilus

| Line 225: | Line 223: | ||

| March 19, 2019
| 2021-06-01
| asynchronous replication, auto-retry of failed writes due to grown defect remapping
|-
| Octopus

| Line 234: | Line 232: | ||

|-
| Pacific
| {{Version |o |16.2.0 }}
| March 31, 2021<ref>[https://ceph.io/releases/v16-2-0-pacific-released/ Ceph.io — v16.2.0 Pacific released<!-- Bot generated title -->]</ref>
| 2023-06-01

| Line 240: | Line 238: | ||

|-
| Quincy
| {{Version |co |17.2.0 }}
| April 19, 2022<ref>[https://ceph.com/en/news/blog/2022/v17-2-0-quincy-released/ Ceph.io — v17.2.0 Quincy released]</ref>
| 2024-06-01
| auto-setting of min_alloc_size for novel media
|-
| Reef
| {{Version |c |18.2.0 }}
|
|-
| Squid
| {{Version |p |TBA }}
| TBA

| Line 252: | Line 256: | ||

|} |

|} |

||

{{Version |l |show=111101}} |

{{Version |l |show=111101}} |

||

== Available platforms == |

|||

While basically built for Linux, Ceph has been also partially ported to Windows platform. It is production-ready for [[Windows Server 2016]] (some commands might be unavailable due to lack of [[UNIX socket]] implementation), [[Windows Server 2019]] and [[Windows Server 2022]], but testing/development can be done also on [[Windows 10]] and [[Windows 11]]. One can use Ceph RBD and CephFS on Windows, but OSD is not supported on this platform.<ref>{{cite web |title=Ceph for Windows |url=https://cloudbase.it/ceph-for-windows/ |publisher=Cloudbase Solutions |access-date=2 July 2023}}</ref><ref name=windows>{{cite web |title=Installing Ceph on Windows |url=https://docs.ceph.com/en/latest/install/windows-install/ |website=Ceph |access-date=2 July 2023}}</ref><ref>{{cite web |last1=Pilotti |first1=Alessandro |title=Ceph on Windows |url=https://www.youtube.com/watch?v=O2F7DWrESgw |website=YouTube |access-date=2 July 2023}}</ref> |

There is also a [[FreeBSD]] implementation of Ceph.<ref name=freebsd/> |

==Etymology== |

The name "Ceph" is a shortened form of "[[cephalopod]]", a class of [[Mollusca|molluscs]] that includes squids, cuttlefish, nautiloids, and octopuses. The name (emphasized by the logo) suggests the highly parallel behavior of an octopus and was chosen to associate the file system with "Sammy", the [[banana slug]] mascot of [[University of California, Santa Cruz|UCSC]].<ref name="ibm-developerworks"/> Both cephalopods and banana slugs are molluscs. |

==See also== |

{{Commons category|Ceph}} |

* {{Official website|https://ceph.io/}} |

* {{YouTube|KJkyV-fJvow|State of the Cephalopod 2022}} |

* {{YouTube|zmlFmS2b3wY|State of the Cephalopod}} (2023) |

{{File systems}} |

[[Category:Distributed file systems supported by the Linux kernel]] |

[[Category:Network file systems]] |

[[Category:Red Hat software]] |

[[Category:Userspace file systems]] |

[[Category:Virtualization software for Linux]] |

[[Category:Free software programmed in Python]] |

[[Category:Software using the LGPL license]] |

Latest revision as of 05:20, 1 June 2024

| Original author(s) | Inktank Storage (Sage Weil, Yehuda Sadeh Weinraub, Gregory Farnum, Josh Durgin, Samuel Just, Wido den Hollander) |
|---|---|
| Developer(s) | Red Hat, Intel, CERN, Cisco, Fujitsu, SanDisk, Canonical and SUSE[1] |
| Stable release | 18.2.0[2] |
| Repository | |
| Written in | C++, Python[3] |
| Operating system | Linux, FreeBSD,[4] Windows[5] |
| Type | Distributed object store |
| License | LGPLv2.1[6] |
| Website | ceph |

Ceph (pronounced /ˈsɛf/) is a free and open-source software-defined storage platform that provides object storage,[7] block storage, and file storage built on a common distributed cluster foundation. Ceph provides fully distributed operation without a single point of failure and scales to the exabyte level. Since version 12 (Luminous), Ceph no longer relies on a conventional filesystem to store data: its own storage back end, BlueStore, manages HDDs and SSDs directly. Ceph can also expose a POSIX filesystem.

Ceph replicates data with fault tolerance,[8] using commodity hardware and Ethernet/IP networking, and requires no specific hardware support. Ceph is highly available and ensures strong data durability through techniques including replication, erasure coding, snapshots, and clones. By design, the system is both self-healing and self-managing, minimizing administration time and other costs.
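The capacity trade-off between replication and erasure coding can be made concrete with a little arithmetic. The following is a minimal sketch under simplifying assumptions: it ignores `min_size`, full and near-full ratios, BlueStore overhead, and uneven data distribution, and it assumes every replica or chunk sits in a separate failure domain. The function names, the 120 TB raw figure, and the 4+2 profile are hypothetical examples, not recommendations.

```python
def replicated_usable(raw_tb: float, size: int) -> float:
    """Usable capacity when every object is stored `size` times (e.g. size=3)."""
    if size < 1:
        raise ValueError("size must be at least 1")
    return raw_tb / size


def ec_usable(raw_tb: float, k: int, m: int) -> float:
    """Usable capacity of a k+m erasure-coded pool: k data chunks plus m coding chunks."""
    if k < 1 or m < 0:
        raise ValueError("need k >= 1 and m >= 0")
    return raw_tb * k / (k + m)


def failures_tolerated_replicated(size: int) -> int:
    # One surviving copy is enough to recover the object.
    return size - 1


def failures_tolerated_ec(m: int) -> int:
    # Any k of the k+m chunks are enough to reconstruct the object.
    return m


if __name__ == "__main__":
    raw = 120.0  # hypothetical raw cluster capacity in TB
    print("3x replication:", replicated_usable(raw, 3), "TB usable,",
          failures_tolerated_replicated(3), "failures tolerated")
    print("EC 4+2:", ec_usable(raw, 4, 2), "TB usable,",
          failures_tolerated_ec(2), "failures tolerated")
```

With these example numbers both layouts survive the loss of two devices, but the 4+2 pool offers twice the usable space (80 TB versus 40 TB), at the cost of extra CPU and network work to encode and reconstruct chunks.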

Large-scale production Ceph deployments include CERN,[9][10] OVH[11][12][13][14] and DigitalOcean.[15][16]

Design

Ceph employs five distinct kinds of daemons:[17]

- Cluster monitors (ceph-mon) that keep track of active and failed cluster nodes, cluster configuration, and information about data placement and global cluster state.

- OSDs (ceph-osd) that manage bulk data storage devices directly via the BlueStore back end,[18] which since the v12.x release has replaced the Filestore[19] back end (implemented on top of a traditional filesystem)

- Metadata servers (ceph-mds) that maintain and broker access to inodes and directories inside a CephFS filesystem

- HTTP gateways (ceph-rgw) that expose the object storage layer as an interface compatible with the Amazon S3 or OpenStack Swift APIs

- Managers (ceph-mgr) that perform cluster monitoring, bookkeeping, and maintenance tasks, and host modules for management and for interfacing with external monitoring systems (e.g. balancer, dashboard, Prometheus, Zabbix)[20]

All of these are fully distributed, and may be deployed on disjoint, dedicated servers or in a converged topology. Clients with different needs directly interact with appropriate cluster components.[21]

Ceph distributes data across multiple storage devices and nodes to achieve higher throughput, in a fashion similar to RAID. Adaptive load balancing is supported whereby frequently accessed services may be replicated over more nodes.[22]
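Ceph computes data placement rather than looking it up in a central table: an object is hashed to a placement group (PG), and the CRUSH algorithm maps each PG to a set of OSDs. The sketch below illustrates that two-step idea under stated assumptions. It substitutes SHA-256 for Ceph's own hash, uses a plain modulo where Ceph uses a "stable mod", and replaces CRUSH with weighted rendezvous (highest-random-weight) hashing, which resembles CRUSH's straw2 bucket selection but omits the hierarchy of failure domains (hosts, racks) that CRUSH walks. All function names are hypothetical.

```python
import hashlib
import math


def stable_hash(*parts: str) -> int:
    """Deterministic 64-bit hash; a stand-in for the hash Ceph actually uses."""
    digest = hashlib.sha256("/".join(parts).encode()).digest()
    return int.from_bytes(digest[:8], "big")


def object_to_pg(obj_name: str, pool_id: int, pg_num: int) -> str:
    """Map an object name to a placement group ID written as 'pool.hex', e.g. '1.2a'.

    Simplification: plain modulo instead of Ceph's stable mod.
    """
    return f"{pool_id}.{stable_hash(obj_name) % pg_num:x}"


def pg_to_osds(pgid: str, osd_weights: dict[int, float], size: int) -> list[int]:
    """Choose `size` distinct OSDs for a PG by weighted rendezvous hashing.

    Each OSD draws ln(u) / weight with u in (0, 1] derived from hash(pgid, osd);
    the highest draws win, so the first pick lands on each OSD with probability
    proportional to its weight.
    """
    if any(w <= 0 for w in osd_weights.values()):
        raise ValueError("weights must be positive")

    def draw(osd: int) -> float:
        u = (stable_hash(pgid, str(osd)) + 1) / 2**64  # u in (0, 1]
        return math.log(u) / osd_weights[osd]           # <= 0; heavier OSDs score closer to 0

    return sorted(osd_weights, key=draw, reverse=True)[:size]
```

Because each OSD's score depends only on the PG and that OSD, removing an OSD that does not hold a PG leaves the PG's mapping unchanged, and removing one that does replaces only that member. Limiting data movement in this way is a central goal of CRUSH as well.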

As of September 2017, BlueStore is the default and recommended storage back end for production environments.[23] It provides better latency and configurability than the older Filestore back end and avoids the shortcomings of filesystem-based storage, which involves additional processing and caching layers. The Filestore back end was scheduled to be deprecated as of the Reef release in mid-2023. XFS was the recommended underlying filesystem for Filestore OSDs, and Btrfs could be used at one's own risk. ext4 filesystems were not recommended due to limited metadata capacity.[24] The BlueStore back end does still use XFS for a small metadata partition.[25]

Object storage S3

Ceph implements distributed object storage via the RADOS Gateway (ceph-rgw), which exposes the underlying storage layer via an interface compatible with Amazon S3 or OpenStack Swift.

Ceph RGW deployments scale readily and often use large, dense storage media for bulk use cases including big data (data lakes), backups and archives, IoT, media, video recording, and deployment images for virtual machines and containers.[26]

Ceph's software libraries provide client applications with direct access to the reliable autonomic distributed object store (RADOS) object-based storage system. More frequently used are libraries for Ceph's RADOS Block Device (RBD), RADOS Gateway, and Ceph File System services. In this way, administrators can maintain their storage devices within a unified system, which makes it easier to replicate and protect the data.

The "librados" software libraries provide access in C, C++, Java, PHP, and Python. The RADOS Gateway also exposes the object store as a RESTful interface which can present as both native Amazon S3 and OpenStack Swift APIs.

Block storage

Ceph can provide clients with thin-provisioned block devices. When an application writes data to Ceph using a block device, Ceph automatically stripes and replicates the data across the cluster. Ceph's RADOS Block Device (RBD) also integrates with Kernel-based Virtual Machine (KVM).

Ceph block storage may be deployed on traditional HDDs and/or SSDs and serves use cases including databases, virtual machines, data analytics, artificial intelligence, and machine learning. Block storage clients often require high throughput and IOPS, so Ceph RBD deployments increasingly use SSDs with NVMe interfaces.

RBD is built on Ceph's foundational RADOS object storage system, which also provides the librados interface and underlies the CephFS file system. Because RBD is built on librados, it inherits librados's abilities, including clones and snapshots. By striping volumes across the cluster, Ceph improves performance for large block device images.
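To make striping and thin provisioning concrete, the sketch below maps a byte offset in an RBD image to the RADOS object that stores it, using the stripe-unit / stripe-count / object-size layout arithmetic Ceph applies when striping. It assumes the common defaults of 4 MiB objects without "fancy" striping (stripe unit equal to the object size, stripe count 1). The helper names and the image ID are hypothetical, and the `rbd_data.<image id>.<16 hex digits>` pattern is an assumed naming convention for format-2 image data objects. Because only objects that have actually been written need to exist, a thin-provisioned image consumes space roughly in proportion to the objects its writes touch.

```python
MiB = 1024 * 1024


def offset_to_object(offset: int, stripe_unit: int, stripe_count: int,
                     object_size: int) -> tuple[int, int]:
    """Return (object number, offset inside that object) for a byte offset in an image."""
    if object_size % stripe_unit != 0:
        raise ValueError("object_size must be a multiple of stripe_unit")
    stripes_per_object = object_size // stripe_unit
    block_no = offset // stripe_unit        # index of the stripe unit containing offset
    stripe_no = block_no // stripe_count    # which row of stripe units across the object set
    stripe_pos = block_no % stripe_count    # which object within the current object set
    object_set_no = stripe_no // stripes_per_object
    object_no = object_set_no * stripe_count + stripe_pos
    offset_in_object = (stripe_no % stripes_per_object) * stripe_unit + offset % stripe_unit
    return object_no, offset_in_object


def objects_touched(offset: int, length: int, stripe_unit: int = 4 * MiB,
                    stripe_count: int = 1, object_size: int = 4 * MiB) -> set[int]:
    """Object numbers that a write of `length` bytes at `offset` would touch."""
    touched = set()
    pos, end = offset, offset + length
    while pos < end:
        touched.add(offset_to_object(pos, stripe_unit, stripe_count, object_size)[0])
        pos = (pos // stripe_unit + 1) * stripe_unit  # jump to the next stripe-unit boundary
    return touched


def rbd_data_object_name(image_id: str, object_no: int) -> str:
    # Assumed naming pattern for format-2 RBD data objects.
    return f"rbd_data.{image_id}.{object_no:016x}"
```

For example, with the defaults a 1 MiB write starting 3.5 MiB into an image straddles an object boundary, so `objects_touched(3 * MiB + MiB // 2, MiB)` reports objects 0 and 1; the rest of the image stays unallocated until written.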

"Ceph-iSCSI" is a gateway which enables access to distributed, highly available block storage from Microsoft Windows and VMware vSphere servers or clients capable of speaking the iSCSI protocol. By using ceph-iscsi on one or more iSCSI gateway hosts, Ceph RBD images become available as Logical Units (LUs) associated with iSCSI targets, which can be accessed in an optionally load-balanced, highly available fashion.

Since ceph-iscsi configuration is stored in the Ceph RADOS object store, ceph-iscsi gateway hosts hold no persistent state and can therefore be replaced, added, or removed at will. As a result, Ceph can deliver distributed, highly available, resilient, and self-healing block storage on commodity hardware using an entirely open-source platform.

The block device can be virtualized to provide block storage to virtual machines in virtualization platforms such as OpenShift, OpenStack, Kubernetes, OpenNebula, Ganeti, Apache CloudStack, and Proxmox Virtual Environment.

File storage

Ceph's file system (CephFS) runs on top of the same RADOS foundation as Ceph's object storage and block device services. The CephFS metadata server (MDS) provides a service that maps the directories and file names of the file system to objects stored within RADOS clusters. The metadata server cluster can expand or contract, and it can dynamically rebalance file system metadata across ranks to spread the load evenly among cluster hosts. This helps maintain high performance and prevents heavy loads on specific hosts within the cluster.
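Once a path has been resolved to an inode, a CephFS client can compute which RADOS objects hold the file's data and read or write them directly on the OSDs, so bulk data I/O does not pass through the MDS. A minimal sketch of that computation follows. It assumes the default file layout (4 MiB objects, stripe count 1) and a `<inode number in hex>.<object index as 8 hex digits>` naming convention for data objects; the function name and the inode numbers in the usage are hypothetical.

```python
DEFAULT_OBJECT_SIZE = 4 * 1024 * 1024  # assumed default layout: 4 MiB objects, stripe_count 1


def cephfs_data_object(ino: int, offset: int,
                       object_size: int = DEFAULT_OBJECT_SIZE) -> tuple[str, int]:
    """Return (RADOS object name, offset within it) for a byte offset in a CephFS file.

    Assumes no custom striping, so object index = offset // object_size.
    """
    object_no = offset // object_size
    return f"{ino:x}.{object_no:08x}", offset % object_size
```

Under these assumptions, byte 10 MiB of inode `0x10000000000` lives 2 MiB into object `10000000000.00000002`.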

Clients mount the POSIX-compatible file system using a Linux kernel client. An older FUSE-based client is also available. The servers run as regular Unix daemons.

CephFS is commonly used for log collection, messaging, and general-purpose file storage.

Dashboard

Since 2018, Ceph has included a Dashboard web UI for managing the cluster. It is developed by the Ceph community under the LGPL-3 license and uses ceph-mgr, Python, the Angular framework, and Grafana.[27] Its landing page was refreshed in early 2023.[28]

Earlier dashboard projects, all now discontinued, included Calamari (2013–2018), OpenAttic (2013–2019), VSM (2014–2016), Inkscope (2015–2016), and Ceph-Dash (2015–2017).[29]

Crimson

Beginning in 2019, the Crimson project has been reimplementing the OSD data path. The goal of Crimson is to minimize latency and CPU overhead. Modern storage devices and interfaces, including NVMe and 3D XPoint, have become much faster than HDDs and even SAS/SATA SSDs, but CPU performance has not kept pace. Moreover, crimson-osd is meant to be a backward-compatible drop-in replacement for ceph-osd. While Crimson can work with the BlueStore back end (via AlienStore), a new native ObjectStore implementation called SeaStore is also being developed, along with CyanStore for testing purposes. One reason for creating SeaStore is that transaction support in the BlueStore back end is provided by RocksDB, which needs to be re-implemented to achieve better parallelism.[30][31][32]

History

Ceph was created by Sage Weil for his doctoral dissertation,[33] which was advised by Professor Scott A. Brandt at the Jack Baskin School of Engineering, University of California, Santa Cruz (UCSC), and sponsored by the Advanced Simulation and Computing Program (ASC), including Los Alamos National Laboratory (LANL), Sandia National Laboratories (SNL), and Lawrence Livermore National Laboratory (LLNL).[34] The first line of code that ended up being part of Ceph was written by Sage Weil in 2004 while at a summer internship at LLNL, working on scalable filesystem metadata management (known today as Ceph's MDS).[35] In 2005, as part of a summer project initiated by Scott A. Brandt and led by Carlos Maltzahn, Sage Weil created a fully functional file system prototype which adopted the name Ceph. Ceph made its debut with Sage Weil giving two presentations in November 2006, one at USENIX OSDI 2006[36] and another at SC'06.[37]

After his graduation in autumn 2007, Weil continued to work on Ceph full-time, and the core development team expanded to include Yehuda Sadeh Weinraub and Gregory Farnum. On March 19, 2010, Linus Torvalds merged the Ceph client into Linux kernel version 2.6.34[38][39] which was released on May 16, 2010. In 2012, Weil created Inktank Storage for professional services and support for Ceph.[40][41]

In April 2014, Red Hat purchased Inktank, bringing the majority of Ceph development in-house to produce an enterprise version with support and continuous maintenance (new versions).[42]

In October 2015, the Ceph Community Advisory Board was formed to assist the community in driving the direction of open source software-defined storage technology. The charter advisory board includes Ceph community members from global IT organizations that are committed to the Ceph project, including individuals from Red Hat, Intel, Canonical, CERN, Cisco, Fujitsu, SanDisk, and SUSE.[43]

In November 2018, the Linux Foundation launched the Ceph Foundation as a successor to the Ceph Community Advisory Board. Founding members of the Ceph Foundation included Amihan, Canonical, China Mobile, DigitalOcean, Intel, OVH, ProphetStor Data Services, Red Hat, SoftIron, SUSE, Western Digital, XSKY Data Technology, and ZTE.[44]

In March 2021, SUSE discontinued its Enterprise Storage product incorporating Ceph in favor of Rancher's Longhorn,[45] and the former Enterprise Storage website was updated stating "SUSE has refocused the storage efforts around serving our strategic SUSE Enterprise Storage Customers and are no longer actively selling SUSE Enterprise Storage."[46]

Release history

| Name | Release | First release | End of life | Milestones |
|---|---|---|---|---|
| Argonaut | 0.48 | July 3, 2012 | | First major "stable" release |
| Bobtail | 0.56 | January 1, 2013 | | |
| Cuttlefish | 0.61 | May 7, 2013 | | ceph-deploy is stable |
| Dumpling | 0.67 | August 14, 2013 | May 2015 | namespace, region, monitoring REST API |
| Emperor | 0.72 | November 9, 2013 | May 2014 | multi-datacenter replication for RGW |
| Firefly | 0.80 | May 7, 2014 | April 2016 | erasure coding, cache tiering, primary affinity, key/value OSD backend (experimental), standalone RGW (experimental) |
| Giant | 0.87 | October 29, 2014 | April 2015 | |
| Hammer | 0.94 | April 7, 2015 | August 2017 | |
| Infernalis | 9.2.0 | November 6, 2015 | April 2016 | |
| Jewel | 10.2.0 | April 21, 2016 | 2018-06-01 | Stable CephFS, experimental OSD back end named BlueStore, daemons no longer run as the root user |
| Kraken | 11.2.0 | January 20, 2017 | 2017-08-01 | BlueStore is stable, EC for RBD pools |
| Luminous | 12.2.0 | August 29, 2017 | 2020-03-01 | pg-upmap balancer |
| Mimic | 13.2.0 | June 1, 2018 | 2020-07-22 | snapshots are stable, Beast is stable, official GUI (Dashboard) |
| Nautilus | 14.2.0 | March 19, 2019 | 2021-06-01 | asynchronous replication, auto-retry of failed writes due to grown defect remapping |
| Octopus | 15.2.0 | March 23, 2020 | 2022-06-01 | |
| Pacific | 16.2.0 | March 31, 2021[47] | 2023-06-01 | |
| Quincy | 17.2.0 | April 19, 2022[48] | 2024-06-01 | auto-setting of min_alloc_size for novel media |
| Reef | 18.2.0 | August 3, 2023[49] | | |
| Squid | TBA | TBA | | |

Available platforms

Although Ceph is built primarily for Linux, it has also been partially ported to Windows. It is production-ready on Windows Server 2016 (some commands might be unavailable due to the lack of a UNIX socket implementation), Windows Server 2019, and Windows Server 2022, while testing and development can also be done on Windows 10 and Windows 11. Ceph RBD and CephFS can be used on Windows, but OSDs are not supported on this platform.[50][5][51]

There is also a FreeBSD implementation of Ceph.[4]

Etymology

The name "Ceph" is a shortened form of "cephalopod", a class of molluscs that includes squids, cuttlefish, nautiloids, and octopuses. The name (emphasized by the logo) suggests the highly parallel behavior of an octopus and was chosen to associate the file system with "Sammy", the banana slug mascot of UCSC.[17] Both cephalopods and banana slugs are molluscs.

See also

- BeeGFS

- Distributed file system

- Distributed parallel fault-tolerant file systems

- Gfarm file system

- GlusterFS

- IBM General Parallel File System (GPFS)

- Kubernetes

- LizardFS

- Lustre

- MapR FS

- Moose File System

- OrangeFS

- Parallel Virtual File System

- Quantcast File System

- RozoFS

- Software-defined storage

- XtreemFS

- ZFS

- Comparison of distributed file systems

References

- ^ "Ceph Community Forms Advisory Board". 2015-10-28. Archived from the original on 2019-01-29. Retrieved 2016-01-20.

- ^ https://github.com/ceph/ceph/releases/tag/v18.2.0. Retrieved 26 August 2023.

- ^ "GitHub Repository". GitHub.

- ^ a b "FreeBSD Quarterly Status Report".

- ^ a b "Installing Ceph on Windows". Ceph. Retrieved 2 July 2023.

- ^ "LGPL2.1 license file in the Ceph sources". GitHub. 2014-10-24. Retrieved 2014-10-24.

- ^ Nicolas, Philippe (2016-07-15). "The History Boys: Object storage ... from the beginning". The Register.

- ^ Jeremy Andrews (2007-11-15). "Ceph Distributed Network File System". KernelTrap. Archived from the original on 2007-11-17. Retrieved 2007-11-15.

- ^ "Ceph Clusters". CERN. Retrieved 12 November 2022.

- ^ "Ceph Operations at CERN: Where Do We Go From Here? - Dan van der Ster & Teo Mouratidis, CERN". YouTube. 24 May 2019. Retrieved 12 November 2022.

- ^ Dorosz, Filip (15 June 2020). "Journey to next-gen Ceph storage at OVHcloud with LXD". OVHcloud. Retrieved 12 November 2022.

- ^ "CephFS distributed filesystem". OVHcloud. Retrieved 12 November 2022.

- ^ "Ceph - Distributed Storage System in OVH [en] - Bartłomiej Święcki". YouTube. 7 April 2016. Retrieved 12 November 2022.

- ^ "200 Clusters vs 1 Admin - Bartosz Rabiega, OVH". YouTube. 24 May 2019. Retrieved 15 November 2022.

- ^ D'Atri, Anthony (31 May 2018). "Why We Chose Ceph to Build Block Storage". DigitalOcean. Retrieved 12 November 2022.

- ^ "Ceph Tech Talk: Ceph at DigitalOcean". YouTube. 7 October 2021. Retrieved 12 November 2022.

- ^ a b c M. Tim Jones (2010-06-04). "Ceph: A Linux petabyte-scale distributed file system" (PDF). IBM. Retrieved 2014-12-03.

- ^ "BlueStore". Ceph. Retrieved 2017-09-29.

- ^ "BlueStore Migration". Archived from the original on 2019-12-04. Retrieved 2020-04-12.

- ^ "Ceph Manager Daemon — Ceph Documentation". docs.ceph.com. Archived from the original on June 6, 2018. Retrieved 2019-01-31. Archived June 19, 2020, at the Wayback Machine.

- ^ Jake Edge (2007-11-14). "The Ceph filesystem". LWN.net.

- ^ Anthony D'Atri, Vaibhav Bhembre (2017-10-01). "Learning Ceph, Second Edition". Packt.

- ^ Sage Weil (2017-08-29). "v12.2.0 Luminous Released". Ceph Blog.

- ^ "Hard Disk and File System Recommendations". ceph.com. Archived from the original on 2017-07-14. Retrieved 2017-06-26.

- ^ "BlueStore Config Reference". Archived from the original on July 20, 2019. Retrieved April 12, 2020.

- ^ "10th International Conference "Distributed Computing and Grid Technologies in Science and Education" (GRID'2023)". JINR (Indico). 2023-07-03. Retrieved 2023-08-09.

- ^ "Ceph Dashboard". Ceph documentation. Retrieved 11 April 2023.

- ^ Gomez, Pedro Gonzalez (23 February 2023). "Introducing the new Dashboard Landing Page". Retrieved 11 April 2023.

- ^ "Operating Ceph from the Ceph Dashboard: past, present and future". YouTube. 22 November 2022. Retrieved 11 April 2023.

- ^ Just, Sam (18 January 2021). "Crimson: evolving Ceph for high performance NVMe". Red Hat Emerging Technologies. Retrieved 12 November 2022.

- ^ Just, Samuel (10 November 2022). "What's new with Crimson and Seastore?". YouTube. Retrieved 12 November 2022.

- ^ "Crimson: Next-generation Ceph OSD for Multi-core Scalability". Ceph blog. Ceph. 7 February 2023. Retrieved 11 April 2023.

- ^ Sage Weil (2007-12-01). "Ceph: Reliable, Scalable, and High-Performance Distributed Storage" (PDF). University of California, Santa Cruz. Archived from the original (PDF) on 2017-07-06. Retrieved 2017-03-11.

- ^ Gary Grider (2004-05-01). "The ASCI/DOD Scalable I/O History and Strategy" (PDF). University of Minnesota. Retrieved 2019-07-17.

- ^ Dynamic Metadata Management for Petabyte-Scale File Systems, SA Weil, KT Pollack, SA Brandt, EL Miller, Proc. SC'04, Pittsburgh, PA, November, 2004

- ^ "Ceph: A scalable, high-performance distributed file system," SA Weil, SA Brandt, EL Miller, DDE Long, C Maltzahn, Proc. OSDI, Seattle, WA, November, 2006

- ^ "CRUSH: Controlled, scalable, decentralized placement of replicated data," SA Weil, SA Brandt, EL Miller, DDE Long, C Maltzahn, SC'06, Tampa, FL, November, 2006

- ^ Sage Weil (2010-02-19). "Client merged for 2.6.34". ceph.newdream.net. Archived from the original on 2010-03-23. Retrieved 2010-03-21.

- ^ Tim Stephens (2010-05-20). "New version of Linux OS includes Ceph file system developed at UCSC". news.ucsc.edu.

- ^ Bryan Bogensberger (2012-05-03). "And It All Comes Together". Inktank Blog. Archived from the original on 2012-07-19. Retrieved 2012-07-10.

- ^ Joseph F. Kovar (July 10, 2012). "The 10 Coolest Storage Startups Of 2012 (So Far)". CRN. Retrieved July 19, 2013.

- ^ Red Hat Inc (2014-04-30). "Red Hat to Acquire Inktank, Provider of Ceph". Red Hat. Retrieved 2014-08-19.

- ^ "Ceph Community Forms Advisory Board". 2015-10-28. Archived from the original on 2019-01-29. Retrieved 2016-01-20.

- ^ "The Linux Foundation Launches Ceph Foundation To Advance Open Source Storage". 2018-11-12.[permanent dead link]

- ^ "SUSE says tschüss to Ceph-based enterprise storage product – it's Rancher's Longhorn from here on out".

- ^ "SUSE Enterprise Software-Defined Storage".

- ^ Ceph.io — v16.2.0 Pacific released

- ^ Ceph.io — v17.2.0 Quincy released

- ^ Flores, Laura (6 August 2023). "v18.2.0 Reef released". Ceph Blog. Retrieved 26 August 2023.

- ^ "Ceph for Windows". Cloudbase Solutions. Retrieved 2 July 2023.

- ^ Pilotti, Alessandro. "Ceph on Windows". YouTube. Retrieved 2 July 2023.

Further reading

- M. Tim Jones (2010-05-04). "Ceph: A Linux petabyte-scale distributed file system". DeveloperWorks > Linux > Technical Library. Retrieved 2010-05-06.

- Jeffrey B. Layton (2010-04-20). "Ceph: The Distributed File System Creature from the Object Lagoon". Linux Magazine. Archived from the original on April 23, 2010. Retrieved 2010-04-24.

- Carlos Maltzahn; Esteban Molina-Estolano; Amandeep Khurana; Alex J. Nelson; Scott A. Brandt; Sage Weil (August 2010). "Ceph as a scalable alternative to the Hadoop Distributed File System". ;login:. 35 (4). Retrieved 2012-03-09.

- Martin Loschwitz (April 24, 2012). "The RADOS Object Store and Ceph Filesystem". HPC ADMIN Magazine. Retrieved 2012-04-25.